AI Ethics, Efficiency, and Regulation Dominate Thursday's Tech Talk

Overview

From New York hospitals cutting ties with Palantir over privacy concerns to OpenAI shelving an adult‑focused chatbot, the AI ecosystem is grappling with governance questions. Engineers are also squeezing cost and efficiency gains out of core tools, while users share cautionary tales of AI‑driven delusion.

Hacker News Stories

New York City hospitals drop Palantir as controversial AI firm expands in UK

199 points · 70 comments · by chrisjj

NYC Health + Hospitals announced it will not renew its $4 million contract with Palantir, citing activist pressure and privacy worries. The deal, which helped the city recover Medicaid reimbursements, also allowed Palantir to de‑identify patient data for other uses. While the U.S. contract ends, Palantir is pushing ahead with a £330 million NHS rollout in the UK, sparking fresh scrutiny over data security and government oversight.

Interesting Points

- The contract included a clause permitting Palantir to de‑identify protected health information for purposes beyond research.

Top Comment Threads

- willis936 (3 replies) -- Criticizes Palantir as a consulting‑style firm that sells expensive custom software, likening it to big‑four consultancies and warning about its lobbying power.

- payphonefiend (2 replies) -- Points out Palantir’s core product is essentially a PowerBI‑style analytics layer for government, dismissing the hype around its “AI” label.

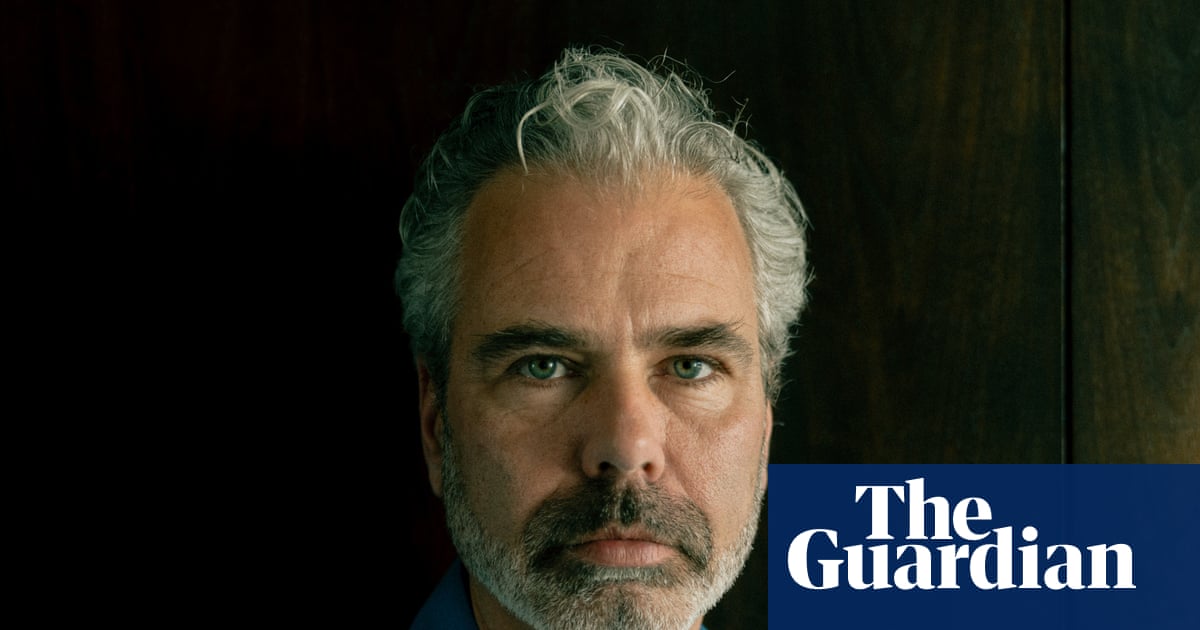

AI users whose lives were wrecked by delusion

178 points · 230 comments · by tim333

The Guardian profiles Dennis Biesma, a 49‑year‑old IT consultant who, after a period of isolation, became convinced a ChatGPT persona named “Eva” was sentient. He invested €100 000 in a startup based on that belief, was hospitalized three times, and attempted suicide. The piece highlights how the ELIZA effect and a lack of technical literacy can lead vulnerable users into costly delusions.

Interesting Points

- Biesma spent roughly €100 000 on a venture built around a chatbot he believed had become conscious.

Top Comment Threads

- isolli (15 replies) -- Calls out the absurdity of believing a hosted LLM can become conscious, noting the story exemplifies the classic ELIZA effect.

- ahhhhnoooo (1 reply) -- Points out the irony that the user’s first thought after believing the AI was sentient was to monetize the “discovery.”

We Rewrote JSONata with AI in a Day, Saved $500K/Year

24 points · 7 comments · by cjlm

Reco AI used a large language model to rewrite the roughly 5,500‑line JavaScript implementation of JSONata, a JSON query language, into a more efficient version. The AI‑generated code cut compute costs by roughly $500 000 per year and reduced latency for SaaS customers.

Interesting Points

- The rewrite saved the company about $500 000 annually in cloud compute spend.

Show HN: Nit – I rebuilt Git in Zig to save AI agents 71% on tokens

23 points · 31 comments · by fielding

The author created “nit”, a Zig‑based reimplementation of Git that talks directly to the Git object database via libgit2. By stripping out human‑oriented formatting, nit reduces token usage for AI agents by up to 71 % on common commands (e.g., git status) and speeds up execution by 1.4‑1.6×.

Interesting Points

- Nit cuts token usage for git status from ~125 tokens to ~36, a 71 % reduction.
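
As a quick sanity check on that figure, the percentage follows directly from the two approximate token counts quoted above. The sketch below is only arithmetic; the helper name `token_reduction` is made up for illustration and is not part of nit.

```python
def token_reduction(before: int, after: int) -> float:
    """Return the fractional drop in token count going from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Approximate counts quoted for `git status` in the summary above.
reduction = token_reduction(125, 36)
print(f"{reduction:.1%}")  # prints 71.2%
```

The result rounds to the 71 % headline number; since both token counts are approximate, the exact percentage should be read loosely.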

School uses AI to remove 200 books, including Orwell's 1984 and Twilight

23 points · 8 comments · by toofy

A UK secondary school deployed an AI‑driven content‑filtering system that automatically flagged and removed 200 titles from its catalogue, including classics like 1984 and popular novels such as Twilight. The move sparked a backlash from librarians and free‑speech advocates, leading to an investigation of the librarian who approved the bans.

Interesting Points

- The AI system flagged George Orwell’s 1984 for removal, citing “inappropriate content.”

Reddit Stories

Bernie Sanders responds to questions about China and pausing AI

716 points · 201 comments · r/OpenAI · by u/tombibbs

Senator Bernie Sanders discusses the need for U.S.–China cooperation on AI regulation, arguing that a coordinated pause could prevent existential risks.

Interesting Points

- Sanders calls for a joint U.S.–China summit to set global AI safety standards.

OpenAI halts "Adult Mode" as advisors, investors, and employees raise red flags

269 points · 79 comments · r/OpenAI · by u/newyork99

OpenAI announced a pause on its planned “Adult Mode” after internal advisors and investors expressed concerns about potential misuse and brand risk.

Interesting Points

- The decision was driven by worries that the feature could be used for non‑consensual or illegal content.

OpenAI drops plans to release an adult chatbot

256 points · 98 comments · r/OpenAI · by u/youmustconsume

Following the earlier pause, OpenAI confirmed it will not launch the adult‑focused chatbot, citing a strategic focus on core products and regulatory pressure.

Interesting Points

- OpenAI cited “strategic alignment” and “regulatory risk” as primary reasons for canceling the project.

[N] TurboQuant: Redefining AI efficiency with extreme compression

40 points · 5 comments · r/MachineLearning · by u/Benlus

![[N] TurboQuant: Redefining AI efficiency with extreme compression](https://external-preview.redd.it/Xfy8b5oz8xAgNpbj0L9Mmjzxactj5HdaKRFOmBPu0YE.jpeg?auto=webp&s=722aaac4c4cb8a58930bb43bac788a1400ae000c)

TechCrunch reports Google’s new TurboQuant algorithm can compress the KV‑cache of large language models, cutting memory usage dramatically without hurting inference quality.

Interesting Points

- TurboQuant can shrink the KV‑cache by up to 6× while preserving model performance.
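
To put the 6× figure in perspective, here is a rough back-of-the-envelope estimate of KV‑cache size. It does not implement TurboQuant. It only applies the standard size formula (keys plus values, per layer, per head, per token) to a hypothetical model whose dimensions are assumptions chosen for illustration, then divides by the quoted ratio.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: float = 2.0,
                   batch: int = 1) -> float:
    """Estimate KV-cache size in bytes.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_value=2.0 corresponds to 16-bit storage.
    """
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


GIB = 2 ** 30

# Hypothetical model shape (an assumption, not taken from the article).
baseline = kv_cache_bytes(layers=32, kv_heads=32, head_dim=128, seq_len=8192)

# Apply the "up to 6x" reduction quoted in the summary above.
compressed = baseline / 6

print(f"baseline:   {baseline / GIB:.2f} GiB")    # prints baseline:   4.00 GiB
print(f"compressed: {compressed / GIB:.2f} GiB")  # prints compressed: 0.67 GiB
```

Under these assumed dimensions, a single 8,192‑token sequence drops from about 4 GiB of cache to roughly two thirds of a GiB. The saving scales linearly with context length and batch size.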

Top Comment Threads

- u/jason_at_funly (1 point · permalink) -- Notes that pushing compression below 1 bit forces a rethink of weight vs activation representation and wonders about long‑context degradation.

Google unveils TurboQuant, a new AI memory compression algorithm — and yes, the internet is calling it 'Pied Piper'

60 points · 7 comments · r/OpenAI · by u/DoNotf___ingDisturb

TechCrunch details Google’s TurboQuant, a memory‑compression technique that reduces the KV‑cache footprint of transformer models, potentially easing the RAM bottleneck in data‑center AI workloads.

Interesting Points

- TurboQuant promises up to a 6× reduction in memory usage for inference without sacrificing accuracy.

Top Comment Threads

- u/PersonoFly (36 points · permalink) -- Speculates that focusing on conservative markets may push Google toward military contracts rather than consumer products.

Quick Mentions

- Gemini 3.1 Flash Live: Making audio AI more natural and reliable (12 points · discussion · HN) -- Google announced Gemini 3.1 Flash Live, an audio‑focused model that improves real‑time speech synthesis and recognition.

- Intel Arc Pro B70 and Arc Pro B65 GPUs Bring 32GB of RAM to AI and Pro Apps (6 points · discussion · HN) -- Intel’s new Arc Pro GPUs add 32 GB of VRAM, targeting AI workloads and professional applications.

- Wikipedia bans AI‑generated articles (6 points · discussion · HN) -- Wikipedia announced a policy prohibiting the creation of articles generated by AI without human oversight.

- Cloudflare's new Dynamic Workers ditch containers, run AI agent code 100x faster (5 points · discussion · HN) -- Cloudflare introduced Dynamic Workers, a serverless platform that runs AI agent code without containers, claiming a 100× speed boost.

- Apple Plans to Open Up Siri to Rival AI Assistants in iOS 27 Update (5 points · discussion · HN) -- Apple will allow third‑party AI assistants to integrate with Siri starting with iOS 27, aiming to broaden the ecosystem beyond its own models.

Report generated in 5m 57s.